Why AI Strategy Is Ultimately a Leadership Problem

Before generative AI entered the mainstream, I led transformations across several insurance companies where automation of the claims process was a central element. What became clear early on was not a limitation of technology, but a leadership reality: fully automated decision-making was neither realistic nor desirable.

Manual checks were not a technological compromise. They were a conscious leadership choice. We didn’t ask, “Can we automate this?” We asked, “Where does automation create value? And where does it introduce unacceptable risk?”

These questions have only become more important in the age of generative AI.

When technical capability outpaces business judgment

A common misconception in AI adoption is the belief that technical feasibility implies strategic wisdom. It does not. Effective AI strategy is never purely technical. It sits at the intersection of economics, organizational design, risk, and human judgment.

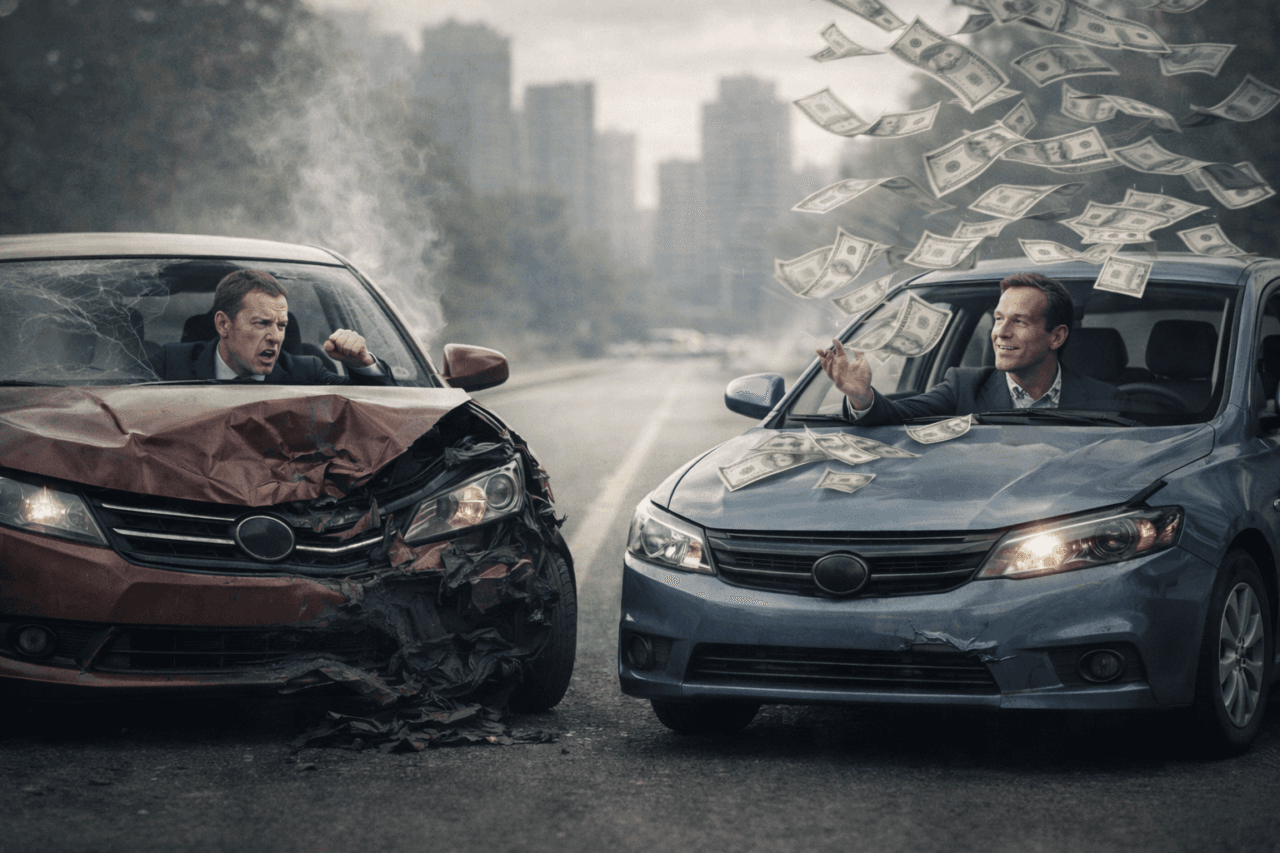

A well-known example from motor insurance illustrates this tension. An insurer explored fully automated claims handling using image recognition: customers uploaded photos, AI assessed the damage, and payouts were calculated automatically.

From a technical standpoint, the system performed well. From a business standpoint, it did not.

In claims processing, errors are costly in both directions. Overpayments quietly erode margins. Underpayments trigger disputes, external assessments, and often higher final costs. Even a small error rate proved economically unacceptable. The system’s accuracy was high, but not high enough for the decision it was asked to make.
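To make the economics concrete, here is a minimal back-of-the-envelope sketch. Every figure in it (claim volume, error rate, cost per overpayment, cost per disputed underpayment) is a made-up assumption for illustration, not data from the insurer described above, and the function name is hypothetical.

```python
def expected_error_cost(claims, error_rate, overpay_share,
                        avg_overpay, avg_dispute_cost):
    """Expected cost of wrong automated payouts. All inputs are hypothetical."""
    wrong = claims * error_rate
    # Overpayments are lost margin; underpayments turn into disputes,
    # external assessments, and often a higher final settlement.
    overpay_cost = wrong * overpay_share * avg_overpay
    underpay_cost = wrong * (1 - overpay_share) * avg_dispute_cost
    return overpay_cost + underpay_cost


# Illustrative assumption: 100,000 claims a year and a model that is
# right 97% of the time, with errors split evenly in both directions.
cost = expected_error_cost(
    claims=100_000,
    error_rate=0.03,
    overpay_share=0.5,
    avg_overpay=400.0,
    avg_dispute_cost=900.0,
)
print(f"Expected annual cost of errors: {cost:,.0f}")
```

With these invented inputs, a 3% error rate already costs close to two million per year. The point is not the specific number but that "97% accurate" and "economically acceptable" are separate questions.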

The solution was not better models, but better leadership design: partial automation. AI supported the process by pre-filling assessments; humans retained final accountability. Processing times dropped dramatically, productivity increased, and overall costs declined.
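As a rough illustration of what such a decision architecture can look like in software, the sketch below has the model pre-fill a draft while a payout is only possible after a named person signs off. The class and function names (`ClaimDraft`, `prefill`, `approve`, `pay`) are hypothetical and do not describe the insurer's actual system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClaimDraft:
    claim_id: str
    ai_estimate: float                    # pre-filled by the model
    approved_by: Optional[str] = None     # the accountable human
    final_payout: Optional[float] = None


def prefill(claim_id: str, ai_estimate: float) -> ClaimDraft:
    """The AI contributes a draft assessment, never a final decision."""
    return ClaimDraft(claim_id=claim_id, ai_estimate=ai_estimate)


def approve(draft: ClaimDraft, adjuster: str,
            corrected_payout: Optional[float] = None) -> ClaimDraft:
    """A human accepts the AI estimate or overrides it with a correction."""
    if not adjuster:
        raise ValueError("a named human adjuster must sign off")
    draft.approved_by = adjuster
    draft.final_payout = (
        draft.ai_estimate if corrected_payout is None else corrected_payout
    )
    return draft


def pay(draft: ClaimDraft) -> float:
    """Payout is blocked unless a human has taken accountability."""
    if draft.approved_by is None or draft.final_payout is None:
        raise PermissionError("no payout without human approval")
    return draft.final_payout


draft = prefill("C-1", ai_estimate=1200.0)
approve(draft, adjuster="adjuster_a", corrected_payout=950.0)
print(pay(draft))
```

The design choice worth noticing is that accountability is enforced by the structure of the process itself: the speed gain comes from the pre-filled draft, while the final decision still carries a person's name.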

The lesson was not about AI capability. It was about decision architecture.

Why AI cannot be managed like traditional IT

A critical leadership mistake is treating AI deployment as a conventional IT project.

Traditional IT systems behave predictably within defined boundaries. AI systems do not. They are probabilistic, imperfect even in their core domain, and degrade gradually outside it. This creates a long tail of plausible but unreliable outputs that must be actively managed.

This is why planning-heavy, waterfall-style approaches struggle. As Clay Shirky famously observed, waterfall effectively amounts to a pledge not to learn while doing the work. With generative AI, learning is the work.

Organizations that succeed take a different approach:

- They design for learning, not perfection. AI scaling laws mean that the last increments of accuracy are disproportionately expensive. Leaders must decide where “good enough plus human judgment” creates more value than technical optimization.

- They default to partial automation in high-risk domains. In areas like insurance, finance, and healthcare, accountability cannot be delegated to a model. Hybrid designs outperform full automation both economically and reputationally.

- They adopt agile deployment models. Instead of grand AI strategies, they start with minimum viable plans: identifying where AI can realistically accelerate work, running focused experiments, monitoring outcomes closely, and iterating continuously.

- They build feedback and correction into the system. Monitoring, transparency, and clear escalation paths are not optional add-ons. They are core components of responsible AI use.

The leadership takeaway

AI often fails when leaders confuse technical capability with organizational readiness.

The organizations that succeed with AI will not be those that automate the most, nor those that wait for certainty. They will be the ones that learn the fastest: organizationally, economically, and ethically.

AI strategy is therefore ultimately a leadership problem.

Image: Fittingly generated by AI.